Photography of a tree with a heart, showcasing a whimsical, twisted tree trunk forming a perfect heartshaped void, set against a dreamy, ethereal forest backdrop...

shot in fe gm 35mm f1.4 of asian female model, doe eyes,

A majestic Goddes of beauty, charming dressed in a regal, jeweled gown and ornate crown, her golden hair cascading down her back...

Jabberwock, hyperrealistic, photorealistic, high details, high quality, shot on Nikon D6, Galen Rowell, Peter Lik, Marc Adamus, David Muench.

pirate character portrait gray hair weathered face thick beard weathered face colorful headband patched clothing and ocean for the background

Smoked cheese hamburger with spicy tomato sauce., Editorial Photography, Photography, Shot on 70mm lens...

strawberries splashing, swirling liquid, realism, octane render, raytracing

A magazine quality photograph of the sky filled with lightning and starting to show the first light of dawn. No ground in the photo.

photo of Princess of Persia, beauty, wallpapers, in the style of light maroon and azure, wandering eye, oriental, portrait, hurufiyya, darkly romantic realism...

A highly realistic, closeup photograph of a beautiful 35 year old redread woman writing in her journal, sitting on her balcony wearing warm, stylish outfits. Shot on a Canon...

artificial intelligence, revolution, publishing, writer, hyperrealistic

photo realistic beautiful young gothic woman

groow cannabis, photo réal 4K

female character fantasy world, for fantasy story, protagonist, interesting and detailed clothes, beautiful, medieval fantasy cinematic shot photo taken by canon, photo taken by fuji...

an intellectual brunette girl, normal looking, portrait style, 25 years, fancy dress, a party in the background, beautiful eyes...

Lets express the bright hope of a sprout that bloomed after enduring for a long time in a barren soil

young blue dragon with horn lightning in the style of dd fantasy full body

ychedelic parrot looking at the camera fine art painting, in the style of fluid lines, 8k resolution...

youtube banner environment nature, no writing, fantasy, islam

tshirt vector, car in city graphic, synthwave, vivid colors, detailed

Portrait of an asian woman. She has pink violet hair style with modern complex hairdressing...

dragon dnd epic, full body, ultra wide angle, incredible detail, epic pose, in the style of Craig Mullins Realistic face, realistic hands, full body, hyper detailed dynamic scene...

Willem Dafoe is the only Jesus Christ Ill ever need

ghost of dragon, art style of kazuki takahashi,

hedgehog face, floating in space, wearing space suit no helmet, cinematic, 50mm f1.8, unreal engine

frozen heart broken neon

Maple tree in a bottle, elixir, the last dance of the sun bends into your palms the autumn light shines, the healing power of the Maple

silhouette of tree against the starry night, in the style of intense use of light and shadow, stockphoto, sharpprickly, rounded, kintsukuroi, sunrays shine upon it, coastal scenery

Capture a surreal portrait of a mythical creature against a bright yellow background. Dress them in a futuristic outfit with textural elements...

A pig dressed as a mason, by Bill Gekas

a cute and funny dragon giving a rose to the viewer cartoon style Pixar 3D

the witch Larina Nix

Abstract

We present Liquid, an auto-regressive generation paradigm that seamlessly integrates visual comprehension and generation by tokenizing images into discrete codes and learning these code embeddings alongside text tokens within a shared feature space for both vision and language. Unlike previous multimodal large language models (MLLMs), Liquid achieves this integration using a single large language model (LLM), eliminating the need for external pretrained visual embeddings such as CLIP. For the first time, Liquid uncovers a scaling law: the performance drop unavoidably brought by the unified training of visual and language tasks diminishes as the model size increases. Furthermore, the unified token space enables visual generation and comprehension tasks to mutually enhance each other, effectively removing the typical interference seen in earlier models. We show that existing LLMs can serve as strong foundations for Liquid, saving 100× in training costs while outperforming Chameleon in multimodal capabilities and maintaining language performance comparable to mainstream LLMs like LLAMA2. Liquid also outperforms models like SD v2.1 and SD-XL (FID of 5.47 on MJHQ-30K), excelling in both vision-language and text-only tasks. This work demonstrates that LLMs such as Qwen2.5 and GEMMA2 are powerful multimodal generators, offering a scalable solution for enhancing both vision-language understanding and generation.
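The shared token space described in the abstract can be pictured with a small sketch. It is an illustration only, not the authors' implementation: the vocabulary sizes, the offset scheme, and the helper names (`image_code_to_token_id`, `build_sequence`, `is_image_token`) are all assumptions, and the page does not say how image codes are actually mapped into the LLM vocabulary.

```python
from typing import List

# Hypothetical sizes, chosen only for illustration.
TEXT_VOCAB_SIZE = 32000      # assumed size of the text vocabulary
IMAGE_CODEBOOK_SIZE = 8192   # assumed size of the discrete image codebook


def image_code_to_token_id(code: int) -> int:
    """Map a discrete image code to an ID placed after the text vocabulary."""
    if not 0 <= code < IMAGE_CODEBOOK_SIZE:
        raise ValueError(f"image code out of range: {code}")
    return TEXT_VOCAB_SIZE + code


def build_sequence(text_ids: List[int], image_codes: List[int]) -> List[int]:
    """Concatenate text tokens and image tokens into one token sequence."""
    return list(text_ids) + [image_code_to_token_id(c) for c in image_codes]


def is_image_token(token_id: int) -> bool:
    """Under the assumed offset scheme, image tokens sit above the text range."""
    return token_id >= TEXT_VOCAB_SIZE


sequence = build_sequence([1, 2], [0, 5])
```

With a layout like this, a single embedding table and a single next-token objective cover both modalities, which is the property the abstract attributes to the unified token space.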

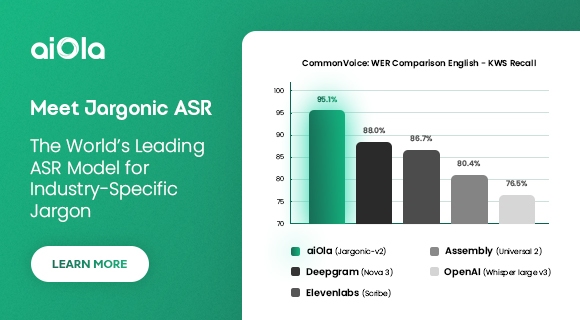

How Well Do LLMs Perform in Visual Generation?

Compared with other auto-regressive methods, Liquid achieves a better overall score on GenAI-Bench under both basic and advanced prompts. This suggests that the images generated by Liquid align better semantically with the input text prompts. On MJHQ-30K, Liquid not only has a lower FID than all other auto-regressive methods but also surpasses most well-known diffusion models, indicating that LLMs are also capable of generating high-quality images.
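For readers unfamiliar with the metric, FID (Fréchet Inception Distance) is conventionally defined as below, where $\mu_r, \Sigma_r$ and $\mu_g, \Sigma_g$ are the mean and covariance of Inception features of real and generated images. This is the standard definition, not a description of the authors' evaluation code, which the page does not detail. Lower values indicate that the generated distribution is closer to the real one.

```latex
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2
  + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2} \right)
```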

Will Understanding and Generation Tasks Mutually Improve Each Other?

To answer this question, we conduct three sets of experiments. In the first set, we use a combination of 10M text-only data, 10M visual generation data, and 10M visual understanding data, for a total of 30M data in the pre-training phase. This experiment, with a 1 : 1 : 1 data ratio across the three tasks, serves as the baseline. Building on this baseline, we separately add 10M more visual generation data and 10M more visual understanding data, giving a total of 40M data with ratios of 1 : 2 : 1 and 1 : 1 : 2, respectively, labeled as "Add T2I" and "Add I2T".
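The three data mixtures can be checked with a few lines of arithmetic. The quantities (in millions) come from the paragraph above; the dictionary layout and the `summarize` helper are illustrative names, not part of the original work.

```python
from functools import reduce
from math import gcd
from typing import Dict, Tuple

# Data amounts in millions, in the order text : visual generation : visual understanding.
MIXES: Dict[str, Dict[str, int]] = {
    "Baseline": {"text": 10, "visual_gen": 10, "visual_und": 10},
    "Add T2I": {"text": 10, "visual_gen": 20, "visual_und": 10},
    "Add I2T": {"text": 10, "visual_gen": 10, "visual_und": 20},
}


def summarize(mix: Dict[str, int]) -> Tuple[int, Tuple[int, ...]]:
    """Return the total amount (in millions) and the reduced ratio of a mixture."""
    amounts = list(mix.values())
    divisor = reduce(gcd, amounts)
    return sum(amounts), tuple(a // divisor for a in amounts)


for name, mix in MIXES.items():
    total, ratio = summarize(mix)
    print(f"{name}: {total}M total, ratio {' : '.join(map(str, ratio))}")
```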

"Visual Gen." refers to the data used for training text-to-image generation, while "Visual Und." refers to the data used for visual understanding. Compared to the baseline, adding more visual understanding data enhances the visual generation capability, improving the semantic consistency between the generated content and the prompt. Conversely, increasing the visual generation data similarly aids in enhancing the model's visual understanding ability. This indicates that when the tokens for visual generation and understanding are unified, they share a common optimization objective and can mutually enhance each other.

Will Multimodal Generation Follow the Scaling Laws?

We explore the visual generation performance of LLMs ranging from 0.5B to 32B in size after mixed training with language data and text-to-image data. As shown in the figures below, as model size and training iterations increase, the validation loss decreases smoothly, while token accuracy and VQA Score consistently increase.

Larger models eventually obtain more robust visual generation outcomes. Samples are drawn from Liquid models of 4 different sizes (0.5B, 1B, 2B, 9B) and 3 different training steps (5K, 15K, 40K).
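The validation loss and token accuracy tracked in these scaling experiments can be illustrated with a minimal sketch. It assumes the usual definitions (mean next-token negative log-likelihood and top-1 accuracy); the page does not spell out the exact formulas, and `next_token_metrics` is a hypothetical helper.

```python
import math
from typing import Sequence, Tuple


def next_token_metrics(
    probs: Sequence[Sequence[float]], targets: Sequence[int]
) -> Tuple[float, float]:
    """Return (mean negative log-likelihood, top-1 accuracy) over token positions.

    probs[i] is the predicted distribution over the vocabulary at position i,
    and targets[i] is the ground-truth token ID at that position.
    """
    if len(probs) != len(targets) or not targets:
        raise ValueError("probs and targets must be non-empty and of equal length")
    total_nll = 0.0
    correct = 0
    for dist, target in zip(probs, targets):
        total_nll += -math.log(dist[target])
        predicted = max(range(len(dist)), key=dist.__getitem__)
        correct += int(predicted == target)
    n = len(targets)
    return total_nll / n, correct / n


# Toy example with a 3-token vocabulary: the first prediction is right, the second is wrong.
loss, accuracy = next_token_metrics([[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]], [0, 2])
```

In a unified token space the same two quantities apply to image tokens and text tokens alike, so they can be compared across model sizes and training steps.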

Does Visual Generation Compromise Language Ability?

To validate whether acquiring image understanding and generation capabilities has any impact on the original language abilities of the LLMs, we report overall zero-shot performance across a suite of popular benchmarks.

Liquid can largely retain the language ability compared with text-only training.

Multi-modal mixed training does impact language performance when the model size is small. However, this degradation gradually disappears as the model size increases.

Does Language Ability Limit Visual Generation Performance?

Mixed training results in higher validation loss on visual generation tasks for models of every size. However, its impact on the VQA Score diminishes as the size of the model increases.
