¹HKUST

†Corresponding authors

Abstract

Audio and music generation have emerged as crucial tasks in many applications, yet existing approaches face significant limitations: they operate in isolation without unified capabilities across modalities, suffer from scarce high-quality, multi-modal training data, and struggle to effectively integrate diverse inputs. In this work, we propose AudioX, a unified Diffusion Transformer model for Anything-to-Audio and Music Generation. Unlike previous domain-specific models, AudioX can generate both general audio and music with high quality, while offering flexible natural language control and seamless processing of various modalities including text, video, image, music, and audio. Its key innovation is a multi-modal masked training strategy that masks inputs across modalities and forces the model to learn from masked inputs, yielding robust and unified cross-modal representations. To address data scarcity, we curate two comprehensive datasets: vggsound-caps with 190K audio captions based on the VGGSound dataset, and V2M-caps with 6 million music captions derived from the V2M dataset. Extensive experiments demonstrate that AudioX not only matches or outperforms state-of-the-art specialized models, but also offers remarkable versatility in handling diverse input modalities and generation tasks within a unified architecture.
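The abstract does not say how the multi-modal masking is implemented. One plausible reading is token-level masking, sketched below under our own assumptions. Each modality's input is treated as a sequence of embedding vectors. A random fraction of positions in every modality is hidden, and the hidden positions are replaced by a placeholder, so the model has to rely on the remaining context and on the other modalities. The function name `mask_modalities`, the 0.5 default ratio, and the use of a zero vector in place of a learned mask embedding are all hypothetical. This is an illustration only, not the AudioX implementation.

```python
import random
from typing import Dict, List, Tuple

Token = List[float]        # one embedding vector
TokenSeq = List[Token]     # one modality's token sequence


def mask_modalities(
    inputs: Dict[str, TokenSeq],
    mask_ratio: float = 0.5,   # hypothetical ratio; the page does not state one
    seed: int = 0,
) -> Tuple[Dict[str, TokenSeq], Dict[str, List[bool]]]:
    """Randomly hide a fraction of tokens in every modality (illustrative sketch).

    Hidden tokens are replaced by a zero vector, a stand-in for a learned
    mask embedding. Returns the masked inputs and, per modality, a boolean
    list marking which positions were hidden.
    """
    if not 0.0 <= mask_ratio <= 1.0:
        raise ValueError("mask_ratio must be in [0, 1]")
    rng = random.Random(seed)
    masked_inputs: Dict[str, TokenSeq] = {}
    masks: Dict[str, List[bool]] = {}
    for name, seq in inputs.items():
        n_mask = round(mask_ratio * len(seq))
        # Pick the positions to hide, sampled without replacement.
        hidden = set(rng.sample(range(len(seq)), n_mask))
        masks[name] = [i in hidden for i in range(len(seq))]
        # Copy visible tokens; replace hidden ones with a zero placeholder.
        masked_inputs[name] = [
            [0.0] * len(tok) if i in hidden else list(tok)
            for i, tok in enumerate(seq)
        ]
    return masked_inputs, masks


inputs = {
    "text": [[1.0, 2.0]] * 4,
    "video": [[3.0, 4.0, 5.0]] * 6,
}
masked, masks = mask_modalities(inputs, mask_ratio=0.5, seed=42)
print({name: sum(m) for name, m in masks.items()})  # {'text': 2, 'video': 3}
```

Masking every modality independently means that, during training, the model regularly sees examples where one input is heavily degraded and another is intact. That pressure toward cross-modal reliance is the intuition the abstract describes. The real masking granularity and ratio may differ.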

Text-to-Audio Generation

Prompt: Thunder and rain during a sad piano solo

Prompt: Typing on a keyboard

Prompt: Ocean waves crashing

Prompt: A person is snoring

Prompt: A toilet flushing

Prompt: Rain falling on a rooftop

Prompt: An airplane is taking flight

Prompt: An explosion and crackling

Prompt: Footsteps in snow

Prompt: A cat meowing repeatedly

Prompt: Food and oil sizzling

Text-to-Music Generation

Prompt: Orchestral, epic, with drums, strings, and brass

Prompt: Electronic dance music with synthesizers, bass, drums, and a slow build-up

Prompt: Sad emotional soundtrack with ambient textures and solo cello

Prompt: A suspenseful scene in a haunted mansion

Prompt: An orchestral music piece for a fantasy world

Prompt: Uplifting ukulele tune for a travel vlog

Prompt: Romantic acoustic guitar music for a sunset scene

Prompt: Smooth urban R&B beat with a mellow groove

Prompt: Produce upbeat electronic music for a dance party

Prompt: Playful 8-bit chiptune music for a retro platformer game

Prompt: Ambient synth music in a deep space setting

Video-to-Audio Generation

Video-to-Music Generation

Teaser

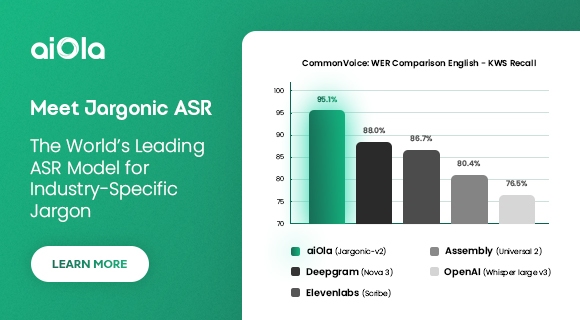

(a) Overview of AudioX, illustrating its capabilities across various tasks. (b) Radar chart comparing the performance of different methods across multiple benchmarks. AudioX demonstrates superior Inception Scores (IS) across a diverse set of datasets in audio and music generation tasks.
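For reference, the Inception Score cited in the caption is conventionally defined as IS = exp(E_x[KL(p(y|x) || p(y))]). Here p(y|x) is a pretrained classifier's class distribution for a generated sample x, and p(y) is that distribution averaged over all samples. The page does not say which audio classifier or how many evaluation splits were used, so the minimal sketch below assumes precomputed class probabilities as input and computes a single-split score. The function name `inception_score` is our own.

```python
import math
from typing import List, Sequence


def inception_score(probs: Sequence[Sequence[float]], eps: float = 1e-12) -> float:
    """Single-split Inception Score from per-sample class probabilities.

    probs[i][j] is the classifier's probability of class j for generated
    sample i; each row is assumed to sum to 1.
    """
    if not probs:
        raise ValueError("need at least one sample")
    n, k = len(probs), len(probs[0])
    # Marginal class distribution p(y): mean of p(y|x) over all samples.
    marginal: List[float] = [sum(row[j] for row in probs) / n for j in range(k)]
    total_kl = 0.0
    for row in probs:
        # KL(p(y|x) || p(y)); terms with p(y|x) = 0 contribute nothing.
        total_kl += sum(
            p * (math.log(p + eps) - math.log(marginal[j] + eps))
            for j, p in enumerate(row)
            if p > 0
        )
    # Exponentiate the mean KL divergence across samples.
    return math.exp(total_kl / n)


print(round(inception_score([[1.0, 0.0], [0.0, 1.0]]), 6))  # 2.0
print(inception_score([[0.5, 0.5], [0.5, 0.5]]))            # 1.0
```

Confident predictions spread evenly over distinct classes give the maximum score, which equals the number of classes (2 here). Identical, uncertain predictions give the minimum of 1. This is why a higher IS is read as generated audio that is both recognizable and diverse.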

Method

The AudioX Framework.

BibTeX

If you find our work useful, please consider citing:

@article{tian2025audiox,
  title={AudioX: Diffusion Transformer for Anything-to-Audio Generation},
  author={Tian, Zeyue and Jin, Yizhu and Liu, Zhaoyang and Yuan, Ruibin and Tan, Xu and Chen, Qifeng and Xue, Wei and Guo, Yike},
  journal={arXiv preprint arXiv:2503.10522},
  year={2025}
}
